The Robots Are Coming. Someone Has to Deploy Them.

I have spent the majority of my career helping enterprises learn how to operationalize the next “big thing” in pursuit of their strategic and financial goals. That has often meant rethinking how work is orchestrated, how products are built, and how markets are engaged and scaled. The bleeding edge of operationalized technology, which is a more boring place than the bleeding edge of technology itself, refers to the technologies that have been embraced by the early adopters and are ready to cross Geoffrey Moore’s proverbial chasm to be picked up by the majority that follows. In this space, someone has to figure out who owns the asset, who fixes it at 2 a.m., who signs the safety attestation, how to orchestrate change so the people living in the status quo do not reject the new tool, and how to be compliant before the regulators have even drafted the rules. For the last twenty years, I have built a career around solving these problems for the early adopters, paving the way for the majority that follows.

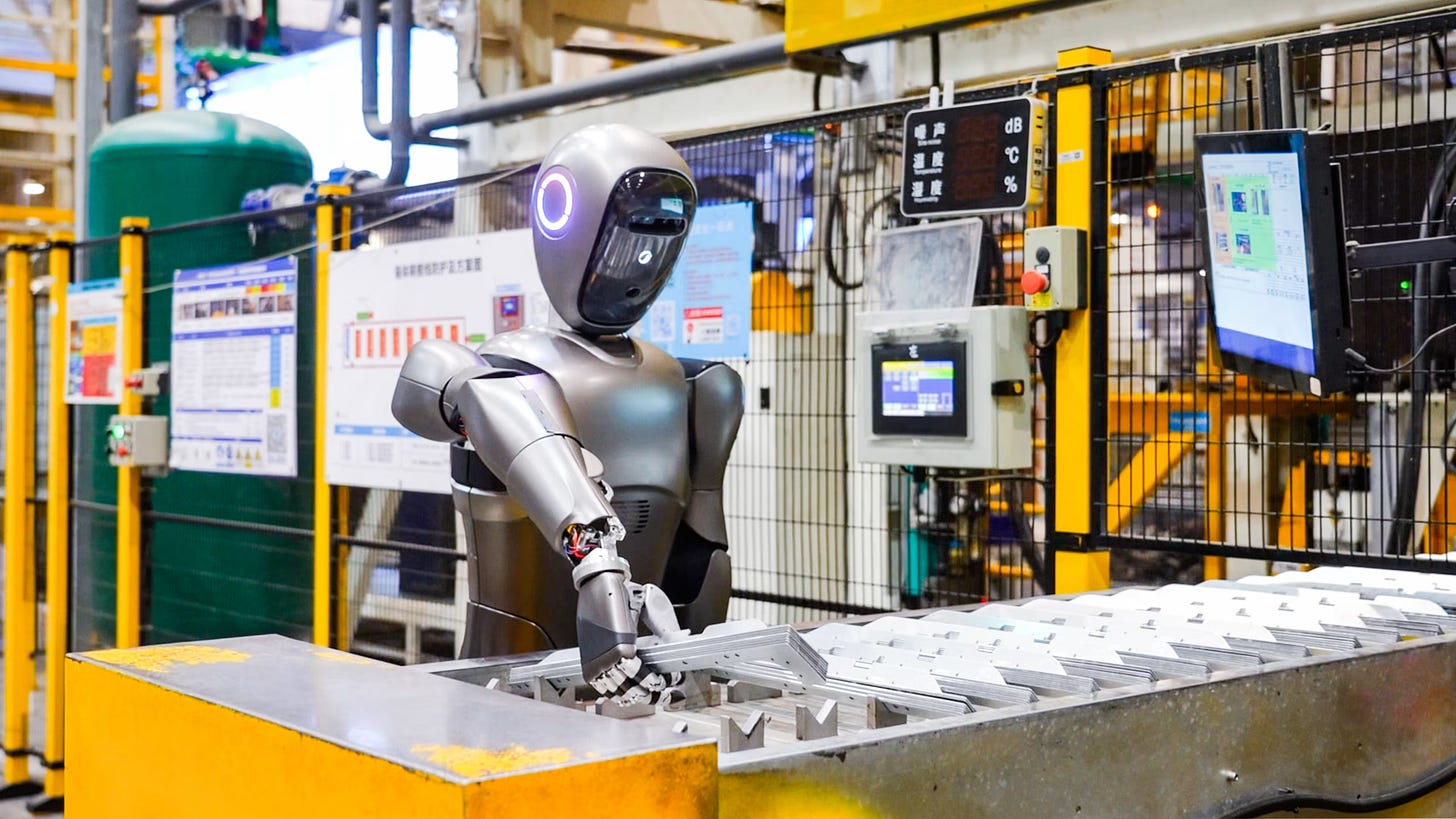

The next big opportunity and challenge in that space is autonomous robotics and humanoids.

Some may see “humanoid” and react with thoughts of science fiction and a future that will never come. If that’s you, let me be the first to shine light on what may be an uncomfortable reality: the robots are coming, and fast. In fact, in some areas, they’re already here. The pilots are running in companies you know, the governance committees exist, the disappointment is starting to show up in the post-mortems, and the second wave of more grounded use is forming. Humanoids are sitting roughly where generative AI was in 2022. The demos are impressive, the funding is flowing like cheap beer at a frat party, the founder narratives are sweeping, and the gap between what is being said about the field and what enterprises will actually have to do with it is the widest it will be for the rest of the cycle.

That gap is where I thrive and what I’ll be learning about over the next several months. I have decided to make that journey public, and I hope that you’ll be interested in learning alongside me.

I should be honest about where I’m focused and where I’m not. I am a used-to-be software and systems engineer, and I lead an enterprise strategy and modernization practice for a mid-tier consulting firm. My day job is helping large organizations change how they operate, adopt new technology, and make those changes work with legacy systems, compliance and regulatory regimes, and human behavior. I have a broad view of how technology gets bought, deployed, and either survives or does not across industries, enterprise scale, and geography.

I am not a roboticist. I do not build hardware. I do not work on AI model internals. The people who do those things will be the source of my research. I will be reading them, not pretending to be them. My contribution is the part that visionaries tend to undersell: how decisions get made inside a Fortune 500, how workflows need to evolve to maximize the return on new technologies, and how to develop change strategies so that new initiatives don’t die the minute things get hard. Essentially, it is about ensuring that new technology survives contact with operational reality.

The reason I want to start now, and not in two years when the case studies get sanitized, is that the early framing of a technology cycle tends to set the terms of the later debate. If the only voices in the room during the orientation phase are vendors and roboticists, the questions enterprises end up asking later get shaped by people who have never had to write a deployment readiness review. I would like there to be at least one voice in the early record preparing the industry for success with enterprise adoption, even if (especially if) that voice gets some things wrong.

Let’s be clear: I will get things wrong. I will break things. I have always broken things. I break things so you don’t have to. This is exploration, not canon. I will be wrong in public. I will publicly update. When I change my mind about something I wrote in week three, I will say so in week eleven, and I will name what changed and why. The implicit deal I want to make with you is that I take the writing seriously enough to be embarrassed by parts of it later, and I will not quietly delete the embarrassing parts. That, and I promise to do the actual writing. It will be sloppy, there will be grammatical errors, but what you won’t get from me is AI slop.

Here are a few things that I am not going to do.

I am not going to make sensational predictions about timelines or how many jobs disappear by what year. The only things worse than AI slop are clickbaity articles and fearmongering. I am not going to take vendor money to write any post in this series. I am not going to act as a marketing channel for any specific company’s robot, even the ones I find interesting. And I am not going to claim expertise I do not have. When I get to a question that requires knowledge of robotics or AI internals, I will name the limit and quote someone who knows. I may even ask an expert to co-write with me.

What I will do is read carefully, watch the field, and write to answer questions that an enterprise would want answered if a CIO walked in tomorrow and asked whether to pilot one of these things. I will explore how a humanoid program may get funded, what first experiments could look like, how ROI could be measured, and what a first deployment looks like in a warehouse, a plant, or a hospital. I will look at how procurement, safety, and labor reshape the pace of adoption, and at what separates a pilot that scales from a pilot that becomes a permanent demo. I will also discuss what should be monitored at the horizon 3 level, how to move those learnings into a horizon 2 experiment, and how to prepare to extract value from the new tech as it becomes operationalized in horizon 1.

Some of those posts will read like field notes. Some will be reactions to a specific thought that blew up, some will be short-form videos, and others will be questions I cannot yet answer, written down so I have to come back to them.

The field is moving fast, the resistance is already rising, and the people who will eventually have to make real decisions about humanoids are mostly focused on AI and not yet in the conversation. I would like to have a library ready by the time they show up.

-AM

Views are personal and do not represent any employer, past or present.