The Only Way to Understand AI Is to Build It

Trail running. Vector search. And the lessons buried in doing hard things well.

There’s a particular kind of fraud you can commit in a leadership role. It’s quiet. Nobody calls you on it directly. You sit across the table from engineers, vendors, and product teams. You nod at the right moments. You ask the questions that sound sharp. And the whole time, you have no real idea what’s actually happening under the hood.

I’ve been that person. More recently than I’d like to admit.

Last week I went to ServiceNow K25. Smart people everywhere. Agentic AI on every slide. I walked away with one feeling I didn’t expect: I had gotten soft. Not strategically. Technically. In this environment, that gap isn’t a personality quirk. It’s a liability.

So I went home and built something.

No infrastructure team. No vendor. No budget. A MacBook Pro, a blank terminal, and a clear intention to learn by doing without losing my temper in the process.

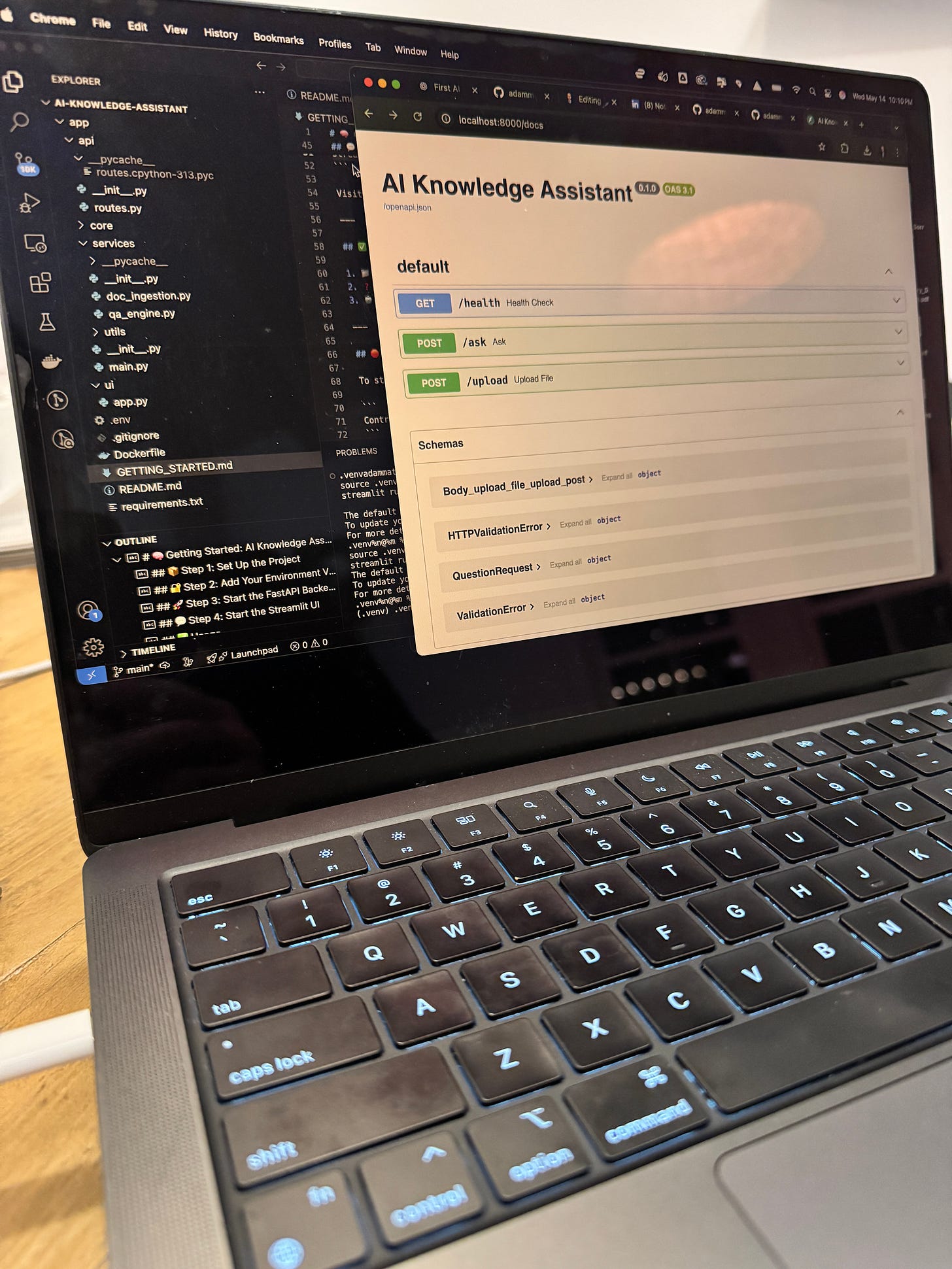

I built a working AI microservice. It takes a document, remembers it, and answers questions about it using context-aware reasoning from GPT-4. FastAPI for the service layer. OpenAI for embeddings and generation. Pinecone for vector search. Streamlit for the front end. I ran the whole thing locally, and it worked.

That’s not the point of this piece. The point is what happened to my thinking when I stopped reading about AI and started building with it.

Why Leaders Stop Building

There’s a rational explanation for why experienced executives drift away from technical depth. Time is finite. Specialization is efficient. You hire people who build so you can focus on what only you can do.

That logic holds until the technology moves fast enough that not understanding it starts compromising your judgment. We crossed that line a while ago. Most organizations just haven’t felt it yet.

Trail running has taught me something about this. Miles from the nearest person, legs burning, no audience, no metrics worth posting. The clarity that comes from that kind of discomfort is different from anything you get in a conference room. It’s earned. It doesn’t lie. And it compounds.

Technical depth works the same way. You don’t get it from a webinar. You get it from reps.

What the Build Taught Me

The architecture behind a functioning AI system is not mysterious. But the moment you’re responsible for making it work, a dozen things become obvious that no trend report would have told you.

Vector search is where the real capability lives. Generative AI without a mechanism for retrieving relevant context is, as I wrote in my notes, essentially theater. Embedding documents into a vector store and pulling the most relevant chunks for a given query is what transforms a language model from a parlor trick into something that behaves like a knowledge worker. Seeing that work even at small scale, stepping through the logic in Visual Studio, made an entire category of enterprise use cases click in a way that reading about them never had.
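For readers who want to see the mechanism rather than take it on faith, here is a minimal sketch of that retrieval step in Python. It is an illustration under stated assumptions, not the service described above: a toy word-count `embed` function stands in for an OpenAI embedding model, a plain list stands in for Pinecone, and the names `embed`, `cosine_similarity`, and `top_k_chunks` are hypothetical. The shape is the part that carries over: turn text into vectors, score each chunk against the query, and hand back the closest matches.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase word counts.
    A real system would call an embedding model here instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty vector is similar to nothing
    return dot / (norm_a * norm_b)


def top_k_chunks(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks whose vectors are closest to the query vector."""
    query_vec = embed(query)
    scored = [(cosine_similarity(query_vec, embed(c)), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:k]]


# An in-memory list stands in for the vector store (assumption).
chunks = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
best = top_k_chunks("How long do refunds take?", chunks, k=1)
print(best)
```

The retrieved chunks are what gets placed in the prompt ahead of the question. Word counts only match exact words, which is exactly the limitation learned embeddings are meant to overcome, but the ranking logic has the same shape.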

The modern stack is composable in a way that changes what’s actually possible. OpenAI is not a monolith. Pinecone is not a platform. Each component is a service you integrate on demand. The real skill isn’t knowing how to build everything. It’s knowing which components solve which problems and how they scale. That understanding only comes from having assembled the pieces yourself.
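One way to picture that composability is as a set of small interfaces with interchangeable parts behind them. The sketch below is a hedged illustration in Python, not the code from the build: the names `Embedder`, `VectorStore`, `Generator`, and `DocumentQA` are hypothetical, and the three stand-in classes are deliberately trivial substitutes for an OpenAI embedding call, a Pinecone index, and a GPT-4 completion.

```python
from typing import Protocol


class Embedder(Protocol):
    def embed(self, text: str) -> list[float]: ...


class VectorStore(Protocol):
    def add(self, vector: list[float], text: str) -> None: ...
    def nearest(self, vector: list[float], k: int) -> list[str]: ...


class Generator(Protocol):
    def answer(self, question: str, context: list[str]) -> str: ...


class DocumentQA:
    """Wires the three components together; it knows nothing about vendors."""

    def __init__(self, embedder: Embedder, store: VectorStore, generator: Generator):
        self.embedder = embedder
        self.store = store
        self.generator = generator

    def remember(self, chunk: str) -> None:
        self.store.add(self.embedder.embed(chunk), chunk)

    def ask(self, question: str, k: int = 3) -> str:
        context = self.store.nearest(self.embedder.embed(question), k)
        return self.generator.answer(question, context)


# Stand-ins (assumptions) so the sketch runs without any external service.
class KeywordEmbedder:
    VOCAB = ("refund", "shipping", "holiday")

    def embed(self, text: str) -> list[float]:
        lowered = text.lower()
        return [1.0 if word in lowered else 0.0 for word in self.VOCAB]


class InMemoryStore:
    def __init__(self) -> None:
        self._rows: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        self._rows.append((vector, text))

    def nearest(self, vector: list[float], k: int) -> list[str]:
        # Rank stored rows by dot product with the query vector.
        def score(row: tuple[list[float], str]) -> float:
            return sum(q * d for q, d in zip(vector, row[0]))

        ranked = sorted(self._rows, key=score, reverse=True)
        return [text for _, text in ranked[:k]]


class TemplateGenerator:
    def answer(self, question: str, context: list[str]) -> str:
        # A real generator would send the question and context to a language model.
        return f"Q: {question} | Context: {' '.join(context)}"


qa = DocumentQA(KeywordEmbedder(), InMemoryStore(), TemplateGenerator())
qa.remember("Refunds are issued within 14 days.")
qa.remember("Shipping takes three to five business days.")
reply = qa.ask("When will my refund arrive?", k=1)
print(reply)
```

Swapping any stand-in for a real client changes one constructor argument and nothing else, which is the practical meaning of "a service you integrate on demand."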

Friction is diagnostic. The bugs I tracked down, the broken routes I traced, the points where the system behaved in ways I didn’t expect: those moments told me more about where enterprise deployments fail than any post-mortem report I’ve reviewed. The gap between “this worked in the demo” and “this works in production” lives exactly in the places where I lost an hour on a Saturday afternoon.

The Case for Getting Your Hands Dirty

If you’re making decisions about which AI capabilities to invest in, which vendors to partner with, and which architectures to approve, but you’ve never built anything yourself, your questions are operating at the wrong altitude. You’re asking about outcomes when you should also be asking about mechanisms. You’re evaluating proposals without the reference experience to know what’s been glossed over.

This isn’t about becoming a full-stack engineer. It’s about closing a specific gap that the current moment has made expensive.

When I built Valor Cycles back in 2018, I was trying to 3D-print carbon fiber racing bicycles and put an American athlete on the Tour de France podium. I tested the hypothesis with prototypes that were, to be generous, not pretty. PVC pipes. Printed lugs. The data was real, though. When it told me I didn’t have the time or capital to make it work, I could say that with confidence because I had done the work. I wasn’t relying on someone else’s assessment. I had the reps.

Same thing here. Building the system doesn’t mean you now run engineering. It means you have a reference experience your judgment can anchor to. When someone tells you vector search is overkill for your use case, you now have an opinion. When someone tells you the retrieval layer is where enterprise deployments consistently fail, you understand why.

Without that reference, you are dependent on the framing other people give you. In a fast-moving environment, that’s a structural disadvantage.

What This Requires

Not everyone needs to build what I built. But everyone leading through this era needs to close their specific version of this gap.

For some that means spinning up a local environment and building something that fails three times before it works. For others it means sitting with engineers not to receive a briefing but to ask: show me where this actually breaks. For others still it means going deep on one concrete use case until the mechanism is clear, not just the pitch.

The specific path matters less than the commitment to earned understanding over curated understanding.

There’s a reason I trail run. There’s a reason I lift heavy. There’s a reason I still build systems. Discomfort is the clearest path to clarity, and clarity repeated becomes capability.

Build something. The blind spots shrink. The questions get better. The strategy tightens.

The future won’t reward those who spectate.